The Most Important AI Project Last Month Wasn’t a New Model

Andrej Karpathy released a 630-line script that runs 100 experiments overnight without human input. The pattern it demonstrates works for founders too — no ML required.

On March 7, Andrej Karpathy published a project called autoresearch to GitHub. Within days it had 21,000 stars and 8.6 million views on his announcement post — numbers that usually signal hype. This time they pointed at something real.

The AI community’s excitement was about the ML application, an agent that autonomously improves a language model training script overnight. But there’s a more important story underneath it: the pattern Karpathy used works on almost any problem where you can measure success and have one variable you can change. Founders run into that combination more often than they might think.

What Karpathy Actually Built

Autoresearch gives an AI agent a single training script and a simple instruction: make it better. The agent modifies the code, runs a five-minute experiment, checks one metric, and keeps the change if it improved things. If it didn’t, the agent discards the change and tries something else. Then it repeats, all night.

At roughly 12 experiments per hour, the agent runs about 100 experiments while you sleep. Karpathy left his running for two days: 700 autonomous changes, 20 additive improvements, an 11% efficiency gain. Shopify CEO Tobi Lütke applied the same approach to an internal model and got a 19% performance improvement from 37 experiments.
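
For anyone who wants to check the arithmetic, here is a quick sketch of where those figures come from. The eight-hour night is my assumption, and reading "20 improvements out of 700 changes" as a keep rate is my interpretation of the reported numbers.

```python
EXPERIMENT_MINUTES = 5   # from the description above: each experiment runs five minutes
NIGHT_HOURS = 8          # assumption: how long "overnight" lasts

per_hour = 60 // EXPERIMENT_MINUTES
per_night = per_hour * NIGHT_HOURS

# Two-day run as reported above: 700 changes tried, 20 kept.
keep_rate = 20 / 700

print(per_hour)              # 12
print(per_night)             # 96
print(f"{keep_rate:.1%}")    # 2.9%
```

Read that way, roughly 97% of the agent's attempts were thrown away. The gains come from volume, not from any single clever change.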

No human in the loop. No approvals required. Just a metric, a file it can edit, and time.

The Three-Part Pattern

The reason autoresearch transfers beyond ML is that the underlying structure is generic. Karpathy calls it the agent loop, and it has three components:

One file to edit. The agent modifies a single, well-scoped artifact. Not a whole system — one file. This keeps the scope of each experiment tight and the results interpretable.

One measurable metric. The agent needs to know whether it improved or not. In autoresearch, that’s validation loss — lower is better, objectively testable. The metric doesn’t have to be complex. It just has to be real.

A fixed time limit per experiment. Each run is capped. This prevents the agent from running forever on a single attempt and pushes it toward many quick experiments rather than a few deep ones.

That’s the full structure. Hypothesis → test → measure → keep or discard → repeat. The reason it works is the same reason A/B testing works: given enough iterations and a clear signal, the loop finds improvements that humans would miss or wouldn’t have the patience to find.
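
The loop is small enough to sketch in a few lines of Python. This is not Karpathy's code. It is a minimal illustration of the keep-or-discard structure, with hypothetical names (`run_loop`, `toy_propose`, `toy_evaluate`) and a toy problem where the "file" is a single number and the metric is its distance from 3. The per-experiment time cap is left out and assumed to live inside whatever `evaluate` does.

```python
import random
from typing import Callable, List, Tuple


def run_loop(
    initial: float,
    propose: Callable[[float, random.Random], float],
    evaluate: Callable[[float], float],
    n_experiments: int,
    seed: int = 0,
) -> Tuple[float, float, List[bool]]:
    """Keep-or-discard loop. Lower score is better, as with validation loss."""
    rng = random.Random(seed)
    best, best_score = initial, evaluate(initial)
    kept: List[bool] = []
    for _ in range(n_experiments):
        candidate = propose(best, rng)   # hypothesis: a small change to the current best
        score = evaluate(candidate)      # test and measure (time cap assumed inside evaluate)
        improved = score < best_score
        if improved:                     # keep the change
            best, best_score = candidate, score
        kept.append(improved)            # otherwise discard; log the outcome either way
    return best, best_score, kept


# Toy stand-ins (hypothetical): one number to edit, one metric to minimize.
def toy_propose(current: float, rng: random.Random) -> float:
    return current + rng.uniform(-1.0, 1.0)


def toy_evaluate(x: float) -> float:
    return abs(x - 3.0)


if __name__ == "__main__":
    best, score, kept = run_loop(0.0, toy_propose, toy_evaluate, n_experiments=100)
    print(f"best={best:.3f} score={score:.3f} kept={sum(kept)}/{len(kept)}")
```

Swap in a different `propose` and `evaluate` and the loop itself does not change, which is why the pattern transfers.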

What This Looks Like for Founders

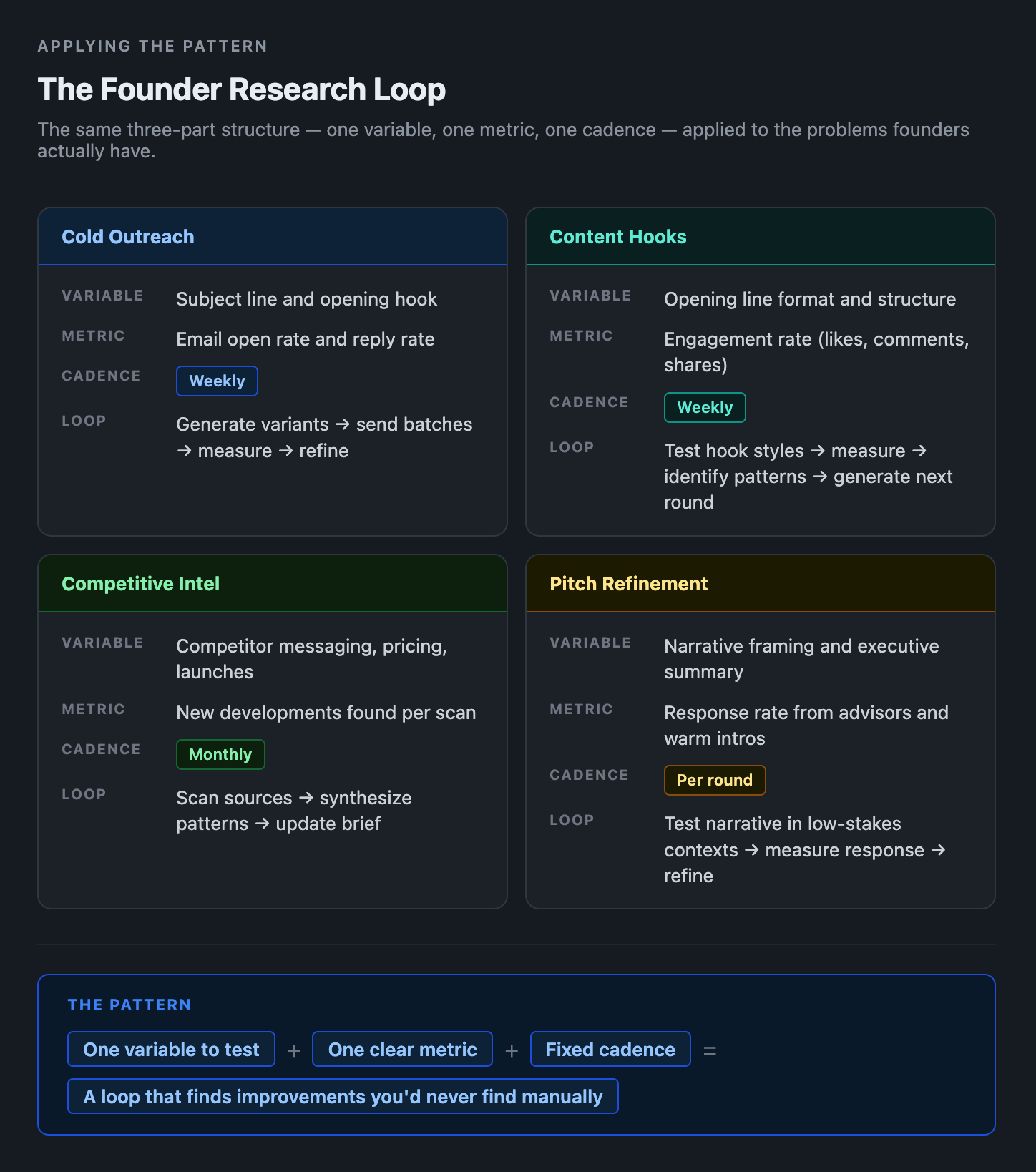

You probably don’t train language models. But you do have problems with a measurable outcome and a variable you can test — which is all the loop requires.

Cold outreach. Your email open rate is the metric. Your subject line is the file. Generate 10 variants, send small batches, measure open rates, and use the winners to inform the next round of variants. You’re not guessing anymore; you’re running the loop. Your outreach platform (whatever you’re using to send and track emails) gives you the measurement layer, and Claude Code running on a schedule handles the generation and analysis.
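
The "measure and pick" step of the outreach loop could look something like the sketch below. Everything in it is illustrative: the `Variant` class, the `pick_winner` function, the 50-send minimum, and the numbers are all made up, and in practice the counts would come from an export out of your outreach platform.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Variant:
    subject: str
    sent: int
    opened: int

    @property
    def open_rate(self) -> float:
        return self.opened / self.sent if self.sent else 0.0


def pick_winner(variants: List[Variant], min_sent: int = 50) -> Optional[Variant]:
    """Return the highest open rate among variants with enough sends to trust."""
    eligible = [v for v in variants if v.sent >= min_sent]
    if not eligible:
        return None
    return max(eligible, key=lambda v: v.open_rate)


# Made-up numbers for illustration only.
batch = [
    Variant("Quick question about your onboarding", sent=60, opened=21),
    Variant("Idea for cutting churn", sent=60, opened=27),
    Variant("Following up", sent=10, opened=8),  # high rate, but too few sends
]
winner = pick_winner(batch)
print(winner.subject if winner else "no variant has enough data yet")
```

The minimum-send guard matters more than it looks. With small batches, a variant can top the list on luck alone, so treat close results as a tie and keep testing.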

Content hooks. Your LinkedIn or Twitter engagement rate is the metric. Your opening line is the file. Test formats systematically — question hooks vs. data points vs. provocative statements vs. narratives. After a few weeks of deliberate iteration, you stop guessing what works for your audience and start knowing.

Competitive monitoring. Your situational awareness is the metric (harder to quantify, but trackable as “new developments found”). The running brief is the file. A scheduled agent monitors competitor websites, job postings, and public announcements, synthesizes patterns, and updates that brief. Each run refines what it looks for based on what turned out to be significant.

Pitch refinement. Your response rate from advisors or warm intros is the metric. Your executive summary is the file. Test different narrative framings in lower-stakes contexts before you’re in front of investors. The loop surfaces which version of your story lands.

What’s Realistic Right Now

I’ll be honest: Karpathy’s ML loop is tighter than what most founders can build today. The feedback signal in ML is clean: you run the model, you get a number. Business feedback is messier. Email open rates take days to settle. Content engagement takes a week. Pitch responses take longer.

But the pattern still works — it just runs slower. The key adjustment is matching the loop speed to how fast you can get signal. Cold email optimization might run on a weekly cadence rather than overnight. Competitive monitoring might synthesize monthly rather than hourly.
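
To make "slower" concrete, here is a rough count of how many keep-or-discard decisions fit in a quarter at those cadences. The 90-day window and the helper name `iterations` are my own choices for illustration.

```python
def iterations(period_days: int, days_per_signal: int) -> int:
    """How many keep-or-discard decisions fit in a period, given the wait for signal."""
    return period_days // days_per_signal


print(iterations(90, 7))    # weekly cold-email loop over a quarter: 12
print(iterations(90, 30))   # monthly competitive brief over a quarter: 3
```

A weekly loop gets about as many decisions in a quarter as autoresearch gets in an hour. That is a good reason to start early and to choose changes that are large enough to show up in the metric.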

Claude Code’s scheduled tasks and /loop command are the right execution layer for this today. Write the analysis logic once, schedule it to run on the cadence that matches your signal speed, and let it accumulate findings over time. The program.md concept from autoresearch translates directly: a single markdown file where you specify what to optimize for, what constraints to respect, and when to stop.

Start with one loop. Pick your highest-leverage variable with the clearest feedback signal. Build that first — not because the others aren’t valuable, but because you’ll learn more from one loop running for a month than from four loops planned and never started.
